Six months ago, we launched Learning Commons to scale proven teaching and learning practices to benefit every learner. We are focused on developing open infrastructure to improve the quality of AI-powered tools in education, from datasets grounded in learning science to systems that help evaluate how well tools actually support teaching and learning.

Since then, we have further developed our tools in close partnership with researchers, educators, and developers. At the ASU+GSV Summit, we announced early access to two new Literacy Evaluators: Subject Matter Knowledge and Conventionality.

- The Subject Matter Knowledge Evaluator identifies the hidden background knowledge a reading passage assumes, so developers can see whether text is truly accessible for a given grade, not just “on-level” by readability. This evaluator enables better prompting by giving developers the information they need to optimize prompts, generate scaffolds, and maintain quality at scale.

- The Conventionality Evaluator analyzes how directly a text communicates its meaning. It identifies whether language is literal and straightforward or layered with figurative expressions, idioms, irony, and implied meaning. Standard readability tools tell you a text’s grade level, but not whether its meaning requires real interpretive work to unpack. This evaluator fills that gap by rating a text’s conventionality complexity, identifying the specific language features driving it, and offering actionable suggestions for scaffolding or instruction. Developers can then make intentional choices about the texts they generate rather than discovering a problem after the fact.

“Building better tools starts with better foundations. Our new Subject Matter Knowledge and Conventionality Evaluators are designed to close the gap between surface-level readability and real comprehension. When developers can see not just what a text says, but what it demands of a reader, they can build with far greater intentionality and impact for educators and learners.”

Sandra Liu Huang, president of Learning Commons

What’s next

Our work is guided by a belief that shared foundations make it easier to build, evaluate, and improve tools in ways that are transparent, evidence-based, and grounded in how students learn. We are pleased that this vision is resonating with the field. Since September, our tools and resources have been downloaded more than 8,000 times.

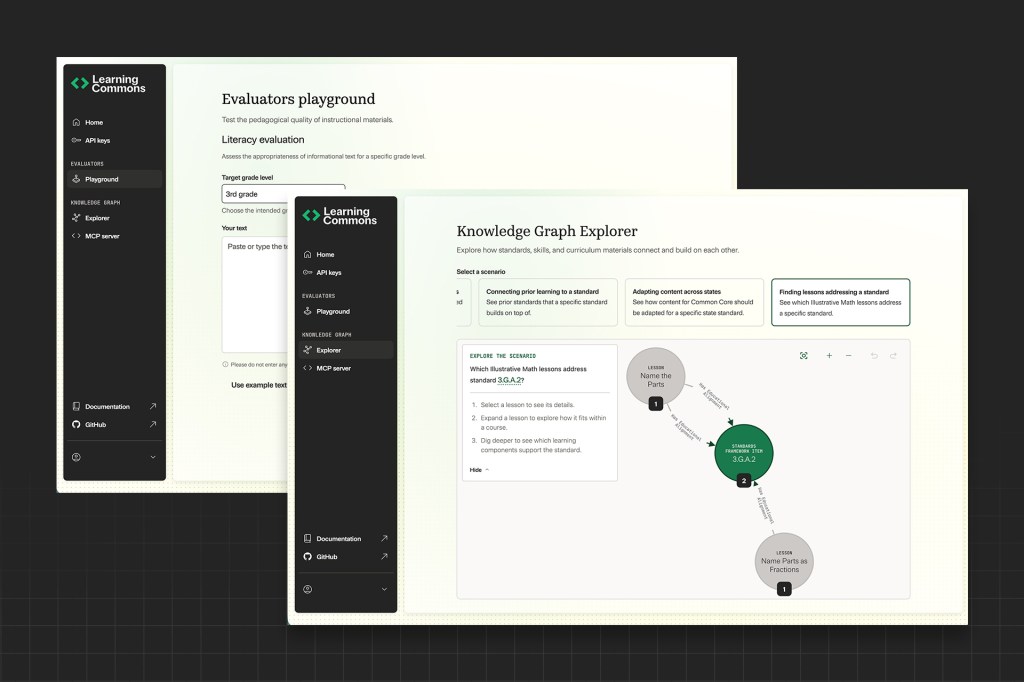

This summer, we will launch a platform designed to lower barriers for those who want to build and evaluate AI-powered education tools responsibly. Starting today, developers can get early access to the platform, which brings together API endpoints, MCP tools, and SDKs so they can discover, explore, and start building with our tools within their existing workflows. Early access also includes a Knowledge Graph Explorer and an Evaluator Playground.

- The Knowledge Graph Explorer lets builders view the structure and content of our datasets in Knowledge Graph, with no prior technical knowledge required. Exploring the datasets visually helps you quickly build a mental model of how learning concepts, standards, and relationships are structured before writing code or integrating.

- The Evaluator Playground is an interactive, browser-based environment for evaluating text passages for student instruction in real time. Developers can experiment, test ideas, and see what works: run an evaluator against a piece of text and immediately see how the output changes. No local setup is required.

We’re excited to provide early access to the platform and invite developers, researchers, and partners to build with us alongside a broader ecosystem investing in shared infrastructure for the long term. This summer, Learning Commons will also further expand access, introducing additional features, integrations, and licensing options to support adoption at scale.

We are also welcoming Laurence Holt to the Learning Commons Advisory Board. Holt joins experts from a range of fields who are helping guide our efforts to develop open, shared AI resources for K–12 education. He is a senior advisor at XQ Institute and Teaching Lab, and a partner in the Coherence Fund. Holt was previously chief product officer of Amplify, where he oversaw the design and development of products used by millions of K–12 students and teachers.

A shared investment in open infrastructure

In parallel with our product development, Learning Commons is supporting the K–12 AI Infrastructure Program led by Digital Promise. Over four years, the program will fund the development of openly shared datasets, models, benchmarks, and other digital public goods that anyone can freely use, adapt, and build upon to help close the gap between learning science and the capabilities of generative AI.

Rather than funding isolated tools, the program is designed to strengthen the field’s shared foundations, creating greater consistency, rigor, and accountability across it.