The problem: AI content quality was inconsistent

Artificial intelligence has moved fast in education. Teachers now have access to tools that can generate lesson plans, reading passages, and instructional materials in seconds. But speed alone doesn’t guarantee quality, and for years, quality control in AI-generated educational content has been, at best, informal.

Teachers have often been left making gut calls. Is this passage appropriate for a third grader? Is the vocabulary too advanced? Do students have the background knowledge they need for comprehension? There has been no structured, evidence-backed framework to answer those questions, so the default workflow has been “generate and hope.”

This is inefficient, and it puts instructional quality at risk. AI tools frequently produce verbose, complex text that lands well above the intended grade level, and teachers don’t have time to edit the output themselves. The result? Students get lost and teachers lose trust in the tools.

Eduaide, founded by former public school teachers who recognized the gap between learning science research and actual classroom practice, saw an opportunity to change that.

The solution: a Grade Level Appropriateness Evaluator built for the classroom

Eduaide partnered with Learning Commons to embed a pedagogical evaluator directly into its product. The result is the Grade Level Appropriateness (GLA) Evaluator.

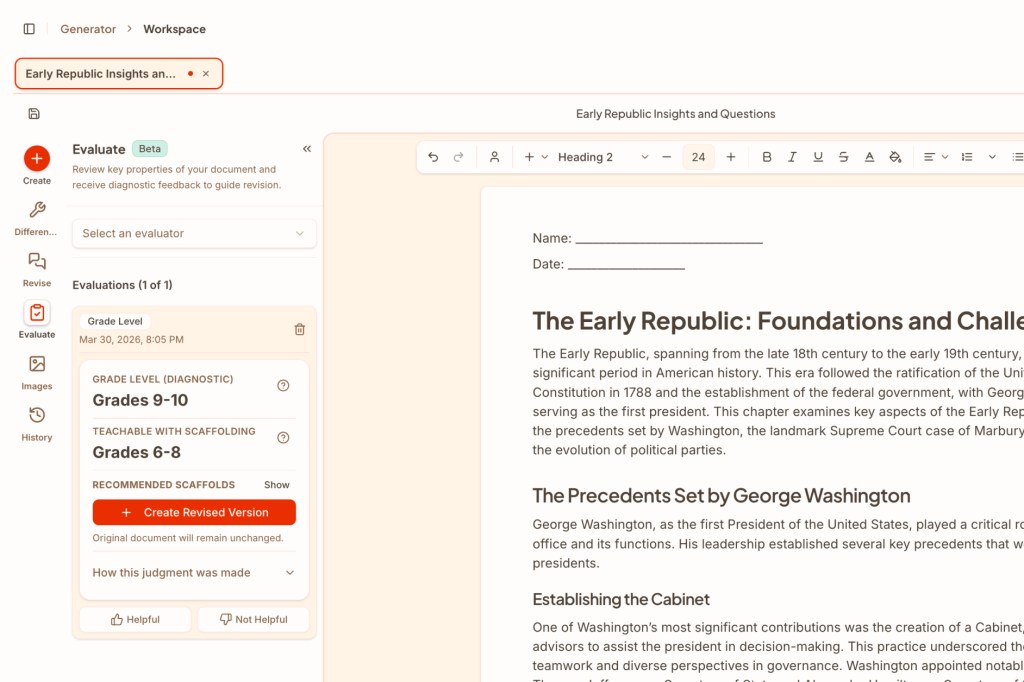

Learning Commons provides infrastructure built for AI edtech companies to assess content quality in their internal pipelines. The evaluator lives inside Eduaide’s Notion-style editor, keeping it low-friction and embedded within an existing teacher workflow. When a teacher runs a passage through it, the evaluator generates:

- A target grade band for independent reading

- An alternative grade band for use with instructional support

- Specific scaffolding suggestions — for example, vocabulary terms to pre-teach

- A plain-language explanation of the reasoning behind the result

Critically, teachers can apply scaffolding suggestions with a single click, instantly generating a revised version of the resource. The evaluator does not just produce a result; it enables action. For the first time, teachers have direct control over validating AI outputs.
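As a rough illustration, the four outputs listed above could be modeled as a simple record. This is a sketch only: the class name `GradeLevelResult`, its field names, the `summarize` helper, and the example values are all hypothetical and do not describe Eduaide’s or Learning Commons’ actual schema.

```python
from dataclasses import dataclass, field


@dataclass
class GradeLevelResult:
    """Hypothetical container for one evaluator run (not Eduaide's schema)."""
    independent_band: str   # target grade band for independent reading
    supported_band: str     # alternative band for use with instructional support
    scaffolds: list[str] = field(default_factory=list)  # e.g. terms to pre-teach
    rationale: str = ""     # plain-language explanation of the result


def summarize(result: GradeLevelResult) -> str:
    """Render the result as a short, teacher-facing summary."""
    lines = [
        f"Independent reading: {result.independent_band}",
        f"With support: {result.supported_band}",
    ]
    lines += [f"Scaffold: {s}" for s in result.scaffolds]
    if result.rationale:
        lines.append(f"Why: {result.rationale}")
    return "\n".join(lines)


# Illustrative values only, not real evaluator output.
example = GradeLevelResult(
    independent_band="Grades 4-5",
    supported_band="Grade 3",
    scaffolds=["Pre-teach: photosynthesis"],
    rationale="Sentences are short, but the topic assumes prior science vocabulary.",
)
print(summarize(example))
```

Keeping the reasoning and scaffolds alongside the grade bands in one record mirrors the point above: the result is meant to be acted on, not just read.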

Under the hood, the evaluator combines quantitative readability analysis (Flesch-Kincaid) with qualitative assessment of language demands, text structure, and required background knowledge. The rubrics are anchored in learning science and validated with leading pedagogical organizations including Student Achievement Partners, CAST, and Achievement Network (ANet).
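For readers unfamiliar with the quantitative half, the Flesch-Kincaid grade level is a published formula based on average sentence length and average syllables per word. The sketch below is a minimal illustration, not the evaluator’s implementation: the function names are made up for this example, and the syllable counter is a crude vowel-group heuristic that will miscount some words.

```python
import re


def count_syllables(word: str) -> int:
    """Rough heuristic: count vowel groups, discounting a trailing silent 'e'."""
    word = word.lower()
    count = len(re.findall(r"[aeiouy]+", word))
    if word.endswith("e") and not word.endswith(("le", "ee")) and count > 1:
        count -= 1
    return max(count, 1)


def flesch_kincaid_grade(text: str) -> float:
    """Flesch-Kincaid grade level:
    0.39 * (words / sentences) + 11.8 * (syllables / words) - 15.59
    """
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z]+(?:'[A-Za-z]+)?", text)
    if not sentences or not words:
        raise ValueError("text must contain at least one sentence and one word")
    syllables = sum(count_syllables(w) for w in words)
    return (
        0.39 * (len(words) / len(sentences))
        + 11.8 * (syllables / len(words))
        - 15.59
    )


print(round(flesch_kincaid_grade("The cat sat on the mat."), 2))
```

A score like this only captures surface features such as word and sentence length, which is why the evaluator pairs it with qualitative judgments about language demands, text structure, and background knowledge.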

The surprise benefit: teachers found a new use case

Early user interviews surfaced something unexpected. Teachers weren’t only running AI-generated content through the evaluator; they were also uploading their own human-created materials to check quality. The tool had become something broader: a way to improve existing instructional assets, not just an AI guardrail.

Teachers described the evaluator as a “transparency mechanism.” For the first time, they had something concrete to act on rather than simply accepting or rejecting AI output. That shift — from passive recipient to active evaluator — is meaningfully changing how educators engage with AI tools.

The workflow has moved from “generate and hope” to “generate, evaluate, and refine.”

Early evidence of impact

“Thanks to our partnership with Learning Commons, we are able to take a form of evaluation technology typically used internally by AI edtech companies and put it in educators’ hands.”

Thomas Thompson, Co-Founder and CEO @ Eduaide.Ai

Eduaide serves ~100 school district partners, and the GLA Evaluator has already cleared a meaningful internal bar: Eduaide’s own team uses it when developing and shipping new tools. That kind of internal adoption is a strong proof point.

Early anecdotal evidence from teacher feedback points to real improvements in content quality. Eduaide and Learning Commons are working together to track three things: teacher behavior change (specifically, whether teachers rerun or edit content after seeing evaluator results), content quality improvements over time, and feedback loops.

The broader question Eduaide is beginning to explore: are teachers becoming better at instructional planning as a result of using these tools? The hypothesis is that consistent, structured feedback on content quality doesn’t just improve individual outputs but also builds pedagogical expertise over time.

Why it matters

As teacher disillusionment with overpromising AI tools continues to grow, Eduaide’s evidence-based approach is a genuine differentiator. By partnering with Learning Commons to bring infrastructure-grade evaluation into the classroom, Eduaide has made AI more collaborative, giving teachers more control and confidence in their tools.

The GLA Evaluator is an early example of what it looks like when learning science, not just language models, shapes teachers’ practice. And increasingly, that foundation is what trust in AI for education will be built on.